In the previous post, we moved from structural parsing to semantic interpretation of the Device Tree, allowing our kernel to dynamically discover peripheral addresses and interrupt lines. But while we have successfully purged hardcoded addresses from the assembly in favor of dynamic discovery, we are still operating in a flat, unprotected physical address space.

To build a truly isolated environment, we need to take those discovered physical locations and map them into a structured virtual layout. This brings us to Virtual Memory.

Since I’ve already written an extensive series on the core mechanics of Virtual Memory, I’m not going to retread that ground here. If you need a refresher on the basics, I highly recommend circling back to those original posts first:

- All about virtual memory I – Motivation

- All about virtual memory II – Operation

- All about virtual memory III – Practical Example

While the high-level concepts remain the same, the implementation details shift significantly when you move from the territory of x86 to the world of AArch64. From the way the hardware handles table walks to the specific bit-level attributes of the descriptors, ARMv8-A introduces its own set of rules under the Virtual Memory System Architecture (VMSA).

In this post, we aren’t just looking at abstract theory. We are building the architectural bridge required to enable the MMU without crashing the system. We will explore the “why” behind our design decisions: from establishing an Identity Mapping to ensure a seamless transition, to configuring the Memory Attribute Indirection Register (MAIR) to manage memory types like nGnRE for the peripherals.

The Transition to Virtual Memory

Up to this point, our kernel has operated in a flat physical address space. When the CPU fetches an instruction from an address like 0x40008000, it is accessing that exact offset in RAM. We are now moving away from this direct mapping by enabling the Memory Management Unit (MMU), which introduces a translation layer that decouples the addresses used by the processor from their physical locations in memory.

To enable it, we have to interact with the MMU. However, transitioning from physical to virtual addressing isn’t as simple as flipping a switch and moving on. It creates a temporary “coherence” problem that we need to solve before we can execute a single line of virtual code.

In AArch64, the MMU is enabled by setting the M bit in the SCTLR_EL1 register. However, the hardware reality of this transition is complex because the processor does not execute instructions in a linear vacuum. Pipelining and speculative execution are the architectural norm; the CPU is perpetually fetching, decoding, and predicting instructions several cycles ahead of the current execution point. When the MMU is toggled, it doesn’t just affect the “next” instruction, it affects the entire state of the pre-fetch engine and the instructions already living in the pipeline.

- Fetch: The CPU fetches the instruction that enables the MMU from a physical address (e.g. 0x40001000).

- Speculation: While that instruction is still being decoded, the CPU might already be speculatively fetching the next instructions at 0x40001004 or 0x40001008.

- Execution: The MMU bit is set.

- The Reality Check: Suddenly, the “guesses” the CPU made a few cycles ago must now be translated through the MMU.

The moment the MMU is enabled, the hardware fundamentally changes how it interprets the Program Counter (PC). What was previously a direct physical address is now treated as a Virtual Address that must be translated through the page tables.

If the address currently being executed does not have a valid translation defined, the CPU’s fetch stage will fail immediately. This creates a critical discontinuity: the processor’s pipeline is already filled with instructions from the physical world, but it can no longer resolve the physical location of the next instruction. Because the hardware cannot reconcile its internal state with the new translation rules, the execution flow snaps, typically resulting in an immediate Instruction Abort or a complete system hang.

Defining the Identity Map

This is where the Identity Mapping comes in. Instead of jumping straight into a complex virtual layout (like moving the kernel to the high half of memory), we first create a 1:1 mapping. By ensuring the virtual and physical worlds overlap perfectly during this phase, we allow the CPU’s pipeline and speculation engine to continue running smoothly. The “meaning” of the PC stays the same before and after the MMU is turned on, giving us the stability needed to execute our first truly “virtual” instructions. This state is a safe zone to perform the transition before we eventually move the kernel to its final location.

VMSA: The Geometry of Memory

Before we can write our first Translation Table entry in Rust, we have to define the configuration parameters. In AArch64, the VMSA is highly configurable. We must tell the hardware exactly how large our virtual address space is and what granule (page size) we intend to use. For this kernel, I’ve settled on a 48-bit Virtual Address space using 4 KiB granules.

While AArch64 allows for 16 KiB or 64 KiB granules, 4 KiB is the industry standard. Most drivers, allocators, and hardware assumptions in the broader ecosystem are built around this size. Furthermore, 4 KiB granules are supported by almost every AArch64 implementation, ensuring our kernel remains highly portable.

The choice of granule is not just about the size of a single page; it defines the entire structure and “depth” of the translation walk. For a 48-bit address space using a 4 KiB granule, the hardware divides the Virtual Address (VA) into four distinct levels. Each level uses 9 bits of the Virtual Address to index into a 512-entry table.

| Level | Size per Entry | Bits used to index |
|-------|----------------|--------------------|
| 0 | 512 GiB | 47:39 |
| 1 | 1 GiB | 38:30 |
| 2 | 2 MiB | 29:21 |
| 3 | 4 KiB | 20:12 |
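To make the split concrete, here is a minimal sketch of how a 48-bit virtual address breaks down into the four table indices with a 4 KiB granule. The function names `table_indices` and `page_offset` are hypothetical helpers for illustration, not part of the kernel.

```rust
/// Index bits consumed per level with a 4 KiB granule (512 entries per table).
const INDEX_BITS: u32 = 9;
/// Bits covered by the offset inside a 4 KiB page.
const PAGE_SHIFT: u32 = 12;

/// Splits a 48-bit virtual address into its L0..L3 table indices.
pub fn table_indices(va: u64) -> [usize; 4] {
    let mut idx = [0usize; 4];
    for level in 0..4u32 {
        // Level 0 starts at bit 39, level 3 at bit 12.
        let shift = PAGE_SHIFT + INDEX_BITS * (3 - level);
        idx[level as usize] = ((va >> shift) & 0x1FF) as usize;
    }
    idx
}

/// Returns the byte offset inside the final 4 KiB page.
pub fn page_offset(va: u64) -> u64 {
    va & ((1 << PAGE_SHIFT) - 1)
}
```

For the address 0x40008000 used earlier, this yields L0 index 0, L1 index 1, L2 index 0, and L3 index 8, which is why the kernel image ends up in the second 1 GiB slot of the L1 table.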

A 48-bit address space provides a total of 256 TiB of virtual memory. While we can technically scale up to 52 bits using the Large Virtual Address extension, 48 bits is more than enough for any modern workload.

Although the kernel is currently locked to 48 bits, the long-term goal is to move these parameters into a compile-time configuration. This will allow the kernel to be easily adapted for 52-bit addressing or other configurations as system requirements evolve, without requiring a fundamental rewrite of the memory management logic.

The L1 Block Shortcut

In a full production-ready OS, these parameters would lead to a 4-level table walk (L0 to L3). However, for our immediate goal (the Identity Map), we don’t need that level of complexity yet.

One of the most powerful features of the AArch64 translation regime is the ability to use Block Descriptors (Hugepages) at the L1 and L2 levels. Instead of pointing to a subsequent table, an L1 entry can be a “terminal” descriptor that maps a contiguous 1 GiB block of memory in a single go. By utilizing these 1 GiB blocks for our Identity Map:

- We significantly reduce pressure on the TLB during the critical transition phase.

- We map our entire kernel and peripheral space with just a couple of entries.

- We keep our early boot code simple, avoiding the need for a complex page allocator.
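As a rough illustration of why a couple of entries is enough, the sketch below computes which L1 slots a physical range touches. The helper `l1_index_range` is hypothetical, and the example ranges assume a QEMU `virt`-style layout (MMIO below 1 GiB, RAM starting at 0x40000000); the RAM size is an assumption made for the example only.

```rust
/// Size covered by one L1 block descriptor.
const SZ_1G: u64 = 1 << 30;

/// Returns the first and last L1 index touched by the half-open range
/// `[start, end)`.
pub fn l1_index_range(start: u64, end: u64) -> (usize, usize) {
    assert!(start < end, "range must not be empty");
    ((start / SZ_1G) as usize, ((end - 1) / SZ_1G) as usize)
}
```

With those assumptions, the MMIO window 0x08000000..0x09000000 falls entirely in slot 0 and 128 MiB of RAM at 0x40000000 falls entirely in slot 1, so two block descriptors cover everything the early boot code touches.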

Memory Attributes and Policies

Mapping a range of addresses is only half the battle. We also need to tell the CPU how to behave when it accesses those addresses. Is it safe to cache this data? Can the CPU reorder these writes to make them faster? Can it speculatively read ahead?

In AArch64, these details are not stored directly in the PTEs as they often are in x86. Instead, we use the MAIR_EL1 register. Think of MAIR as a color palette. We define up to 8 different “flavors” (attributes) in this register, and then each entry in our translation table simply stores an index (0–7) to choose which flavor to use.

For our initial Identity Map, we only need two specific flavors: Device Memory and Normal Memory.
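The palette idea can be sketched in plain Rust: MAIR_EL1 is a 64-bit value holding eight 8-bit slots, and a descriptor only carries the slot number. The helpers `mair_with_slot` and `mair_slot` below are hypothetical and only model the bit layout; they do not touch the real register.

```rust
/// Returns `mair` with slot `idx` (0..=7) replaced by `attr`.
/// The old contents of the slot are cleared before the new value is set.
pub fn mair_with_slot(mair: u64, idx: usize, attr: u8) -> u64 {
    assert!(idx < 8, "MAIR_EL1 has eight slots");
    let shift = idx * 8;
    (mair & !(0xFFu64 << shift)) | ((attr as u64) << shift)
}

/// Reads back the attribute byte stored in slot `idx`.
pub fn mair_slot(mair: u64, idx: usize) -> u8 {
    assert!(idx < 8, "MAIR_EL1 has eight slots");
    (mair >> (idx * 8)) as u8
}
```

Clearing the slot before writing matters if the register does not start out as zero, because a plain OR would mix the new attribute with whatever bits were already there.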

Device Memory

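One slot will be reserved for device memory. This kind of memory is special because accesses must follow a non-Gathering, non-Reordering policy, either with Early Write Acknowledgement (nGnRE) or without it (nGnRnE). The code later in this post uses the strictest variant, nGnRnE, which is encoded as 0x00.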

Gathering

Gathering allows the hardware to merge multiple accesses to the same or adjacent addresses into a single bus transaction. If the memory is marked as non-Gathering then each access must be performed individually, exactly as the instruction specified. This is important for device registers where reading 4 bytes from offset 0x00 and 4 bytes from offset 0x04 might trigger different side effects in the hardware.

G (Gathering):

CPU: ldr w0, [x1] ; 4 bytes from 0x100

CPU: ldr w1, [x1, #4] ; 4 bytes from 0x104

Bus: ──── 8-byte read from 0x100 ──── (merged)

nG (Non-gathering):

CPU: ldr w0, [x1] ; 4 bytes from 0x100

CPU: ldr w1, [x1, #4] ; 4 bytes from 0x104

Bus: ── 4B read 0x100 ── ── 4B read 0x104 ── (separate)

Reordering

Reordering allows the memory subsystem to issue requests in whatever order is most efficient, not the program order.

R (Reordering allowed):

Program order: ldr w0, [status_reg] ; read status

ldr w1, [data_reg] ; read data

Bus might do: read data_reg ──> read status_reg (reordered)

nR (No reordering):

Bus must do: read status_reg ──> read data_reg (program order)

For normal memory (RAM) this is fine: reading address A before address B gives the same result regardless of order. With device memory it is not. In the example above, reading data_reg before status_reg has confirmed that the device is ready could return stale or invalid data.
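The nG and nR attributes constrain the hardware, but the compiler has to be kept in line as well. A common way to do that in Rust is to go through volatile accesses. The sketch below assumes a made-up two-register device (`FakeDevice`, `STATUS_READY`, and `read_when_ready` are hypothetical names), so it can run against ordinary memory.

```rust
use core::ptr::{addr_of, read_volatile};

/// Hypothetical two-register device, laid out like the example above.
#[repr(C)]
pub struct FakeDevice {
    pub status: u32, // offset 0x00
    pub data: u32,   // offset 0x04
}

/// Hypothetical "data is ready" bit in the status register.
pub const STATUS_READY: u32 = 1 << 0;

/// Reads `data` only after `status` reports that the device is ready.
///
/// # Safety
/// `regs` must point to a valid, readable `FakeDevice`.
pub unsafe fn read_when_ready(regs: *const FakeDevice) -> Option<u32> {
    // Volatile reads are not elided, and the compiler does not reorder
    // them relative to other volatile accesses.
    let status = unsafe { read_volatile(addr_of!((*regs).status)) };
    if status & STATUS_READY == 0 {
        return None;
    }
    Some(unsafe { read_volatile(addr_of!((*regs).data)) })
}
```

On real hardware `regs` would be the MMIO base address discovered from the Device Tree, and the Device memory attribute is what keeps the bus from merging or reordering the two reads.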

Early Write Acknowledgement

This policy defines when the write is acknowledged to the CPU. If this property is active, data will be acknowledged as soon as it reaches any intermediate buffer (e.g. a write buffer between the CPU and the bus). The CPU can now move on immediately, even though the data hasn’t reached the destination yet. On the contrary, if this property is disabled, the write is only acknowledged back to the CPU once it has reached the final destination – CPU stalls until the data physically arrives.

E (Early write acknowledgement):

CPU write ──> [write buffer] ──> [bus] ──> [device register]

│

└── ACK back to CPU (early)

CPU continues immediately, data still in transit

nE (No early write acknowledgement):

CPU write ──> [write buffer] ──> [bus] ──> [device register]

│

└── ACK back to CPU

CPU waits until device actually receives the data
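The three properties combine into four Device memory types, each with its own MAIR attribute byte. The sketch below decodes those bytes back into the properties; `DeviceAttr` and `decode_device_attr` are hypothetical names, while the encodings are the ones defined for MAIR_EL1.

```rust
/// The three Device memory properties discussed above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct DeviceAttr {
    pub gathering: bool,
    pub reordering: bool,
    pub early_ack: bool,
}

/// Decodes a MAIR attribute byte into Device memory properties.
/// Returns `None` for any byte that is not a Device encoding.
pub fn decode_device_attr(attr: u8) -> Option<DeviceAttr> {
    let (gathering, reordering, early_ack) = match attr {
        0x00 => (false, false, false), // Device-nGnRnE
        0x04 => (false, false, true),  // Device-nGnRE
        0x08 => (false, true, true),   // Device-nGRE
        0x0C => (true, true, true),    // Device-GRE
        _ => return None,
    };
    Some(DeviceAttr { gathering, reordering, early_ack })
}
```

Each step down the list relaxes one more restriction, so 0x00 is the most conservative choice and 0x0C the most permissive.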

Normal Memory

Normal memory has a much more relaxed set of restrictions compared to device memory. Since the CPU knows that accessing RAM doesn’t trigger hardware side effects, it can use features like caching or speculative pre-fetching to improve performance.

For this kind of memory, we define attributes for two “domains”: Inner and Outer.

- Inner Domain: Usually refers to the closest cache levels to the core (L1 and L2).

- Outer Domain: Usually refers to the last-level cache (L3) or the system-level interconnect before reaching the physical RAM.

The policy we will use for our kernel RAM is Write-Back Cacheable, Inner Shareable and Non-transient. Let’s break down what these terms mean:

Write-Back

The processor does not write the modified cache line back to main memory immediately. Instead, the cache line is marked as dirty. The actual write to RAM is deferred until the cache line needs to be replaced (evicted) to make room for new data.

WB (Write-Back):

CPU write ──> [ Cache (Mark Dirty) ] (Fast / Immediate)

│

[ RAM ] <────────────┘ (Deferred / Only on Eviction)

RAM is NOT updated until the cache line is kicked out.

Write-Through

In contrast, Write-Through ensures that data is written to both the cache and the main memory at the same time. While this improves data consistency between RAM and Cache, it forces the CPU to wait for the slower memory bus.

WT (Write-Through):

CPU write ──> [ Cache ]

└───> [ RAM ] (Simultaneous)

CPU stalls until both acknowledge the write.
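The difference between the two policies is easy to see in a toy model. The sketch below is a deliberately simplified simulation (one cache line in front of one word of "RAM"); `ToyCache` and `Policy` are hypothetical names and this is not how a real cache controller is structured.

```rust
#[derive(Clone, Copy, PartialEq)]
pub enum Policy {
    WriteBack,
    WriteThrough,
}

/// A single cache line in front of a single word of "RAM".
pub struct ToyCache {
    policy: Policy,
    line: u32,
    dirty: bool,
    pub ram: u32,
    /// Number of writes that actually reached RAM.
    pub ram_writes: u32,
}

impl ToyCache {
    pub fn new(policy: Policy) -> Self {
        Self { policy, line: 0, dirty: false, ram: 0, ram_writes: 0 }
    }

    /// CPU store: always updates the cache line.
    pub fn write(&mut self, value: u32) {
        self.line = value;
        match self.policy {
            // Write-Through: RAM is updated on every store.
            Policy::WriteThrough => {
                self.ram = value;
                self.ram_writes += 1;
            }
            // Write-Back: only mark the line dirty.
            Policy::WriteBack => self.dirty = true,
        }
    }

    /// Eviction: a dirty line is written back to RAM exactly once.
    pub fn evict(&mut self) {
        if self.dirty {
            self.ram = self.line;
            self.ram_writes += 1;
            self.dirty = false;
        }
    }
}
```

Three stores followed by an eviction cost one RAM write under Write-Back and three under Write-Through, which is the trade-off between bus traffic and how up to date RAM is at any given moment.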

Cacheable

Determines whether the CPU is allowed to keep a local copy of a memory location in its caches.

Shareable

Defines the observers that the hardware must keep in sync. If memory is Cacheable, you have multiple copies of the same data (one in RAM, one in Core 0's cache, one in Core 1's cache, etc). Shareability is the set of rules that forces those copies to stay identical. If Core 0 modifies a "Cacheable + Inner Shareable" address, the hardware automatically sends a signal to Core 1, Core 2, and Core 3 to update or invalidate their local copies.

Transient

Transient is a hint to the hardware about the temporal locality of the data. Marking memory as transient tells the hardware that the data will be used once, or only for a very short window, so the cache controller might decide not to allocate a cache line for it, or might mark it for early eviction.

On the other hand, Non-Transient hints to the CPU that this data is "standard". We want it to remain in the cache according to standard eviction rules, rather than being treated as throwaway data that should be evicted quickly to save space.
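These choices end up packed into a single MAIR attribute byte: the upper four bits describe the Outer domain and the lower four bits the Inner domain. The sketch below builds that byte for a few common policies, always with both the read-allocate and write-allocate hints set. `Cache` and `normal_attr` are hypothetical names used only for illustration.

```rust
/// Cache policy for one domain (Inner or Outer).
#[derive(Clone, Copy)]
pub enum Cache {
    NonCacheable,
    WriteThrough { transient: bool },
    WriteBack { transient: bool },
}

/// Encodes one 4-bit half of a Normal memory attribute,
/// with the read-allocate and write-allocate hints both set.
fn nibble(policy: Cache) -> u8 {
    match policy {
        Cache::NonCacheable => 0b0100,
        Cache::WriteThrough { transient: true } => 0b0011,
        Cache::WriteThrough { transient: false } => 0b1011,
        Cache::WriteBack { transient: true } => 0b0111,
        Cache::WriteBack { transient: false } => 0b1111,
    }
}

/// Builds the MAIR attribute byte: Outer policy in bits [7:4],
/// Inner policy in bits [3:0].
pub fn normal_attr(outer: Cache, inner: Cache) -> u8 {
    (nibble(outer) << 4) | nibble(inner)
}
```

Write-Back Non-transient in both domains gives 0xFF. Shareability is not part of this byte; it is selected separately in each block or page descriptor.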

Identity Mapping: Structure and Activation

So far, we have set the foundations to understand the different roles memory plays in our system. Now it’s time to put this into practice and translate these concepts into code.

MAIR Ranges

The first step is to configure the MAIR_EL1 register. We will define two slots (indices): one for Device Memory and one for Normal Memory.

use core::arch::asm;

/* MAIR_EL1 memory attribute encodings */

pub const MAIR_DEVICE_NGNRNE: u64 = 0x00;

pub const MAIR_NORMAL_WB: u64 = 0xFF;

/* MAIR_EL1 slot indices (used in AttrIndx field of block/page descriptors) */

pub const MAIR_IDX_DEVICE: usize = 0;

pub const MAIR_IDX_NORMAL_WB: usize = 2;

#[inline(always)]

fn configure_mair_range(conf: u64, range: usize) {

let conf_shifted = conf << (range * 8);

unsafe {

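asm!(

"mrs {tmp}, mair_el1",

// Clear the target slot first: ORing alone would keep any stale bits.

"bic {tmp}, {tmp}, {mask}",

"orr {tmp}, {tmp}, {conf}",

"msr mair_el1, {tmp}",

"isb sy",

conf = in(reg) conf_shifted,

mask = in(reg) 0xFFu64 << (range * 8),

tmp = out(reg) _,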

options(nostack, nomem, preserves_flags)

);

}

}

pub fn setup_mair_ranges() {

// Device: non-Gathering, non-Reordering, no-EarlyWriteACK

configure_mair_range(MAIR_DEVICE_NGNRNE, MAIR_IDX_DEVICE);

// Normal cacheable: inner and outer write-back, non-transient, read/write-allocate (shareability is set per descriptor)

configure_mair_range(MAIR_NORMAL_WB, MAIR_IDX_NORMAL_WB);

}

Identity Mapping

Our next step is to set up the identity mapping. As stated before, this is a temporary mapping that is not focused on performance or security. To keep it simple but architecturally sound, we’ll use two levels: an L0 table pointing to an L1 table.

Descriptor Format

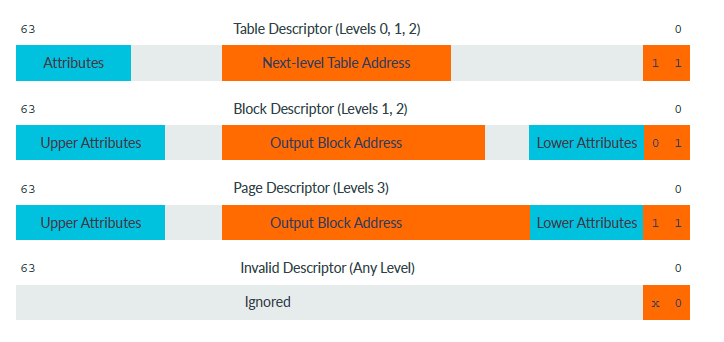

In AArch64, every entry in a translation table is a 64-bit value. However, the hardware interprets these 64 bits differently depending on which level of the table we are in and which bits are set.

As you can see in the diagram above, the two least significant bits are the "type" bits that tell the MMU what it’s looking at:

- Invalid (0b00 or 0b10): Bit 0 is clear, so the CPU will trigger a Translation Fault if it accesses this range.

- Block (0b01): This entry points directly to a large block of memory (1 GiB at Level 1, 2 MiB at Level 2).

- Table (0b11): This entry points to the physical address of the next level of tables. At Level 3, the same encoding denotes a 4 KiB page.
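The rules above can be sketched as a small classifier. It assumes the 4 KiB granule and 48-bit configuration used in this post, and treats encodings that are reserved at a given level as invalid. `DescKind` and `desc_kind` are hypothetical names.

```rust
#[derive(Debug, PartialEq, Eq)]
pub enum DescKind {
    Invalid,
    Block,
    Table,
    Page,
}

/// Classifies a descriptor from its two low bits and its table level (0..=3).
pub fn desc_kind(desc: u64, level: u8) -> DescKind {
    match (desc & 0b11, level) {
        // Blocks exist only at levels 1 and 2 with a 4 KiB granule.
        (0b01, 1 | 2) => DescKind::Block,
        // At the last level, 0b11 is a page rather than a table pointer.
        (0b11, 3) => DescKind::Page,
        (0b11, 0..=2) => DescKind::Table,
        // Bit 0 clear, or an encoding that is reserved at this level.
        _ => DescKind::Invalid,
    }
}
```

The level has to be passed in because the hardware knows it implicitly from how far the walk has progressed, whereas the descriptor bits alone are ambiguous.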

Populating the Table

In many Rust projects, you might see a static mut array, but for a kernel, we want tighter control over where this table lives. In our case, the memory for the table is defined externally (linker.lds) and we access it via an extern symbol.

unsafe extern "C" {

static mut __idmap_l0: u8;

static mut __idmap_l1: u8;

}

Once we have the addresses where the L0 and L1 tables will reside, the next step is to configure them. We will start with L0.

For this one, we only mark it as a Table Descriptor, set the next level table address and set up the following attributes:

- APTABLE0 ([61]) = 0x1: Controls which exception levels can read and write the memory covered by this entry. Here, EL0 access is entirely denied.

- UXNTable ([60]) = 0x1: Propagates Unprivileged Execute-Never to all pages in the subtree. Combined with APTABLE0, this means no read, no write, and no execute for userspace.

Even though I said I wouldn't be focusing on security for now, I believe it is good practice to establish from the foundation what can be done by each exception level.

/* Table Descriptor bits */

pub const TABLE_APTABLE0: u64 = 1 << 61;

pub const TABLE_UXNTABLE: u64 = 1 << 60;

let idmap_l0_ptr = addr_of_mut!(__idmap_l0) as *mut u8;

// 1. Mark the entry as Table Descriptor, it will cover 512 GiB

mark_table_desc(idmap_l0_ptr as *mut Pte);

// 2. Set attributes

set_table_attrs(idmap_l0_ptr as *mut Pte, TABLE_UXNTABLE | TABLE_APTABLE0);

let idmap_l1_ptr = addr_of_mut!(__idmap_l1) as *mut u8;

// 3. Set next level entry

set_next_lvl_table_addr(idmap_l0_ptr as *mut Pte, idmap_l1_ptr as *const u64);
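The helpers used above are not shown in this post, so here is a minimal sketch of the value they are expected to produce for the single L0 entry. `table_desc`, `DESC_VALID_TABLE`, and `TABLE_ADDR_MASK` are hypothetical names, and the table address in the usage note is made up for the example.

```rust
/// Low two bits of a table descriptor.
pub const DESC_VALID_TABLE: u64 = 0b11;
pub const TABLE_APTABLE0: u64 = 1 << 61;
pub const TABLE_UXNTABLE: u64 = 1 << 60;
/// Bits [47:12]: next-level table address with a 4 KiB granule.
pub const TABLE_ADDR_MASK: u64 = 0x0000_FFFF_FFFF_F000;

/// Builds a table descriptor pointing at `next_table_pa`.
pub fn table_desc(next_table_pa: u64, attrs: u64) -> u64 {
    assert_eq!(
        next_table_pa & !TABLE_ADDR_MASK,
        0,
        "table must be 4 KiB aligned and fit in 48 bits"
    );
    next_table_pa | attrs | DESC_VALID_TABLE
}
```

For an L1 table placed at the hypothetical address 0x40081000, `table_desc(0x4008_1000, TABLE_APTABLE0 | TABLE_UXNTABLE)` evaluates to 0x3000000040081003.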

Now it's time for L1. This time we will set the output address instead of the next level table address, because we are treating each entry as a Block Descriptor. For the attributes, depending on which MAIR slot we target, we will assign a different set of permissions:

Device Memory

- UXN ([54]) = 0x1: EL0 can't fetch instructions from this region. Device memory must not be executable.

- PXN ([53]) = 0x1: EL1 can't fetch instructions from this region.

- AF ([10]) = 0x1: Must be set to 1. If left as 0, the first access will generate an Access Flag fault, which we cannot service because we do not have a fault handler yet.

- SH ([9:8]) = 0b00: We leave these bits clear. The hardware ignores this field for Device memory, which is always treated as Outer Shareable.

- AP ([7:6]) = 0b00: With this configuration we ensure EL1 has read/write access while EL0 has no access.

- AttrIndx ([4:2]) = 0x0: MAIR index.

Normal Memory

- UXN ([54]) = 0x1: EL0 can't fetch instructions from this region.

- PXN ([53]) = 0x0: EL1 can fetch instructions from this region.

- AF ([10]) = 0x1: Must be set to 1. If left as 0, the first access will generate an Access Flag fault, which we cannot service because we do not have a fault handler yet.

- SH ([9:8]) = 0b11: Normal memory must be inner shareable so all the CPUs in the same inner domain see a coherent view.

- AP ([7:6]) = 0b00: With this configuration we ensure EL1 has read/write access while EL0 has no access.

- AttrIndx ([4:2]) = 0x2: MAIR index.

/* Size constants */

pub const SZ_1G: usize = 1 << 30;

pub const L1_SIZE_PER_ENTRY: usize = 1 << 30;

/* Block/Page Descriptor bits */

pub const DESC_UXN: u64 = 1 << 54;

pub const DESC_PXN: u64 = 1 << 53;

pub const DESC_AF: u64 = 1 << 10;

pub const DESC_SH_INNER: u64 = 0b11 << 8;

pub const DESC_SH_NONE: u64 = 0b00 << 8;

pub const DESC_AP_RW_EL1: u64 = 0b00 << 6;

unsafe extern "C" {

static __kernel_start: u8;

static __stack_top: u8;

static mut __idmap_l0: u8;

static mut __idmap_l1: u8;

}

let kernel_code_start = addr_of!(__kernel_start) as usize;

let kernel_stack_top = addr_of!(__stack_top) as usize;

let kernel_addr_range = kernel_stack_top - kernel_code_start;

// For now we hardcode mmio range

let mmio_addr_range: usize = 0x09000000 - 0x08000000;

let pages = (kernel_addr_range + mmio_addr_range) / L1_SIZE_PER_ENTRY;

...

let ranges: [u64; 2] = [MAIR_IDX_DEVICE as u64, MAIR_IDX_NORMAL_WB as u64];

let mut j = 0;

for i in 0..pages.max(2) {

let off = (idmap_l1_ptr as *mut Pte).offset(i as isize);

// 0. Clear the descriptor

*off = 0;

// 1. Mark as block descriptor

mark_block_desc(off);

// 2. Setup MAIR range

set_mair_range(off, ranges[j]);

// 3. Set attributes

if j == 0 {

// MMIO desc: Non-executable, No-shareability (typical for device)

set_block_attrs(

off,

DESC_UXN | DESC_PXN | DESC_AF | DESC_SH_NONE | DESC_AP_RW_EL1,

);

} else {

// Normal desc: Executable (UXN only), Inner Shareable (Coherent)

set_block_attrs(

off,

DESC_UXN | DESC_AF | DESC_SH_INNER | DESC_AP_RW_EL1

);

}

// 4. Set output address (identity map: VA = PA = i * 1 GiB)

set_next_lvl_table_addr(off, (i * SZ_1G) as *const u64);

if j < 1 {

j += 1;

}

}
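To check the loop's result by hand, it helps to compute the two descriptors as plain values. The sketch below reuses the bit positions from the constants above; `l1_block_desc`, `DESC_BLOCK`, and `L1_BLOCK_ADDR_MASK` are hypothetical names, and the helpers called in the loop may well be implemented differently.

```rust
/// Low two bits of a block descriptor.
pub const DESC_BLOCK: u64 = 0b01;
pub const DESC_UXN: u64 = 1 << 54;
pub const DESC_PXN: u64 = 1 << 53;
pub const DESC_AF: u64 = 1 << 10;
pub const DESC_SH_INNER: u64 = 0b11 << 8;
/// Bits [47:30]: output address of a 1 GiB block.
pub const L1_BLOCK_ADDR_MASK: u64 = 0x0000_FFFF_C000_0000;

/// Builds an L1 block descriptor mapping the 1 GiB region at `pa`.
pub fn l1_block_desc(pa: u64, attr_idx: u64, flags: u64) -> u64 {
    assert_eq!(pa & !L1_BLOCK_ADDR_MASK, 0, "block must be 1 GiB aligned");
    assert!(attr_idx < 8, "AttrIndx is a 3-bit field");
    // AttrIndx lives in bits [4:2].
    pa | flags | (attr_idx << 2) | DESC_BLOCK
}
```

With MAIR slot 0 for devices and slot 2 for normal memory, entry 0 comes out as 0x0060000000000401 and entry 1 (the 1 GiB block at 0x40000000) as 0x0040000040000709.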

Finalizing the Setup: Control Registers

With our tables populated, we need to tell the CPU how to use them. This involves configuring the Translation Control Register (TCR_EL1) and the Translation Table Base Register (TTBR0_EL1).

We start with TTBR0_EL1. This register simply holds the base physical address of our L0 table.

#[inline(always)]

fn load_ttbr0(base: *const u64) {

unsafe {

asm!(

"msr ttbr0_el1, {tmp}",

"isb sy",

"tlbi vmalle1",

"dsb nsh",

"isb sy",

tmp = in(reg) base,

options(nostack, preserves_flags)

);

}

}

Notice that in the last snippet we use Instruction Synchronization Barriers (isb), Data Synchronization Barriers (dsb), and a TLB invalidation (tlbi) scoped to EL1 translations. This is required because the CPU pipeline and the TLB hardware operate asynchronously from register writes, so without explicit synchronization points the MMU could be enabled with stale translations or before the new TTBR0 is visible to the hardware.

The first isb sy, issued right after we write the address of the L0 table, ensures the CPU commits that write before any subsequent instructions execute. Without it, the processor's out-of-order pipeline could speculatively execute instructions past the msr before the register update is visible. The next step is to invalidate all the TLB entries (tlbi vmalle1) so that no stale entries from a previous mapping survive. After that, we issue a dsb nsh (see footnote 1) to ensure the TLB invalidation has actually completed before we move on. Finally, a last isb sy flushes the pipeline so the CPU sees a clean state (no prefetched instructions from before the TLB invalidation).

Following with TCR_EL1, this register defines the fundamental parameters of the translation process. We need to configure the following bits:

- IPS ([34:32]) = 0b100: This value controls the size of the physical address. In this case we hardcode it to 44 bits, the value QEMU uses for the Cortex-A57.

- EPD1 ([23]) = 0x1: Disable TTBR1 walks. We do not have the final mapping yet.

- TG0 ([15:14]) = 0b00: Sets the granule size for TTBR0_EL1 to 4 KiB.

- SH0 ([13:12]) = 0b11: Sets the shareability of the translation table walk to Inner Shareable.

- ORGN0 ([11:10]) = 0b01: Sets the Outer cacheability for the translation table walk itself to Write-Back Read-Allocate Write-Allocate. This ensures the MMU can use the cache when reading our descriptors.

- IRGN0 ([9:8]) = 0b01: Sets the Inner cacheability for the translation table walk itself to Write-Back Read-Allocate Write-Allocate.

- T0SZ ([5:0]) = 0x10: Defines the size of the memory region addressed by TTBR0_EL1. The region size is \(2^{64 - T0SZ}\) bytes, so a value of 16 gives us a 48-bit address space.

#[inline(always)]

fn configure_tcr() {

unsafe {

asm!(

"mov x0, #0x10", // T0SZ[5:0]=16: VA size = 2^(64-16) = 2^48 (48-bit VA, TTBR0)

"mov x1, #0x1",

"mov x2, #0x3",

"mov x3, #0x10",

"orr x0, x0, x1, LSL #8", // IRGN0[9:8]=0b01: inner cache = normal WB, read/write allocate

"orr x0, x0, x1, LSL #10", // ORGN0[11:10]=0b01: outer cache = normal WB, read/write allocate

"orr x0, x0, x2, LSL #12", // SH0[13:12]=0b11: inner shareable (coherent across all CPUs)

// TG0[15:14]=0b00 (default): 4KB granule for TTBR0

"orr x0, x0, x3, LSL #16", // T1SZ[21:16]=16: 48-bit VA for TTBR1 (disabled by EPD1)

"orr x0, x0, x1, LSL #23", // EPD1[23]=1: disable TTBR1 walks (no high kernel mapping yet)

"orr x0, x0, x1, LSL #31", // TG1[31:30]=0b10 (bit31=1, bit30=0): 4KB granule for TTBR1

"orr x0, x0, x1, LSL #34", // IPS[34:32]=0b100 (bit34=1): 44-bit PA space (16TB)

"msr tcr_el1, x0",

"isb sy",

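// x0-x3 are written by the asm above, so they must be declared as clobbers

out("x0") _, out("x1") _, out("x2") _, out("x3") _,

options(nostack, nomem, preserves_flags)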

);

}

}
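The same register value can also be assembled in plain Rust, which makes it easy to check against the field list. This is only a sketch of an alternative: the `TCR_*_SHIFT` names, `tcr_value`, and `va_bits` are hypothetical, while the field positions and values are the ones used in the assembly above.

```rust
pub const TCR_T0SZ_SHIFT: u64 = 0;
pub const TCR_IRGN0_SHIFT: u64 = 8;
pub const TCR_ORGN0_SHIFT: u64 = 10;
pub const TCR_SH0_SHIFT: u64 = 12;
pub const TCR_T1SZ_SHIFT: u64 = 16;
pub const TCR_EPD1_SHIFT: u64 = 23;
pub const TCR_TG1_SHIFT: u64 = 30;
pub const TCR_IPS_SHIFT: u64 = 32;

/// Number of virtual address bits selected by a given TxSZ value.
pub fn va_bits(txsz: u64) -> u64 {
    64 - txsz
}

/// Builds the TCR_EL1 value described in the list above.
pub fn tcr_value() -> u64 {
    (16 << TCR_T0SZ_SHIFT)           // T0SZ = 16: 48-bit VA for TTBR0
        | (0b01 << TCR_IRGN0_SHIFT)  // inner WB, read/write-allocate walks
        | (0b01 << TCR_ORGN0_SHIFT)  // outer WB, read/write-allocate walks
        | (0b11 << TCR_SH0_SHIFT)    // inner shareable walks
        // TG0 = 0b00 (4 KiB granule) contributes no bits
        | (16 << TCR_T1SZ_SHIFT)     // T1SZ = 16 (unused while EPD1 = 1)
        | (1 << TCR_EPD1_SHIFT)      // disable TTBR1 walks
        | (0b10 << TCR_TG1_SHIFT)    // 4 KiB granule for TTBR1
        | (0b100 << TCR_IPS_SHIFT)   // 44-bit physical addresses
}
```

The result is 0x480903510, and `va_bits(16)` gives 48. Computing the constant on the Rust side would let the inline assembly shrink to a single `msr` plus the barrier, at the cost of passing the value in through a register operand.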

And finally we turn on the MMU. As said before, the MMU is turned on when the bit M in the SCTLR_EL1 register is set.

/* SCTLR_ELX bits */

pub const SCTLR_ELX_MMU: usize = 1 << 0; // MMU Enable

#[inline(always)]

fn enable_mmu() {

unsafe {

asm!(

"mrs {tmp}, sctlr_el1",

"orr {tmp}, {tmp}, {mmu_bit}",

"msr sctlr_el1, {tmp}",

"isb sy",

mmu_bit = in(reg) SCTLR_ELX_MMU,

tmp = out(reg) _,

options(nostack, nomem, preserves_flags)

);

}

}

Next Steps

The identity mapping serves as a critical but temporary bridge for the kernel's transition into virtual memory. In the next post, we will dismantle this 1:1 mapping in favor of a full four-level translation walk, enabling 4 KiB page granularity and the move to the "high half" of the virtual address space. This transition will provide the necessary isolation between the kernel and physical hardware, establishing the permanent logical environment for our operating system.

The complete implementation of the concepts discussed in this post can be found in the project's GitHub repository.

References

- Arm Architecture Reference Manual for A-profile architecture

- Learn the architecture - AArch64 memory management Guide Version 1.4

- What Every Programmer Should Know About Memory

- Learn the architecture - Memory Systems, Ordering, and Barriers

- Learn the architecture - AArch64 memory attributes and properties

1. We use a non-shareable Data Synchronization Barrier because currently we only have one CPU running.