It has been several months since I started integrating Artificial Intelligence (AI) extensively into my daily workflow. What began as a tool for isolated queries has evolved into a core component of my personal projects and professional tasks. During this time, I have witnessed the staggering pace at which the AI ecosystem evolves, but one specific moment changed my perspective on where this technology is heading.

The turning point occurred during the complete redesign of this blog. I wanted a more professional architecture: streamlining the layout, removing legacy comment sections, and reorganizing content by tags and categories. After cloning the repository locally, I started to work hand in hand with Claude Code to implement these changes.

The “shock” moment came when I asked for a mockup of the progress. I expected a simple static file or a basic HTML preview. Instead, Claude Code took the initiative: it began spinning up Docker containers to serve the site. When the containers failed to build due to environment mismatches, the AI didn’t ask for help; it investigated the logs, debugged the configuration, fixed the Dockerfiles, and eventually presented a fully functional, containerized mockup.

It went off-script to solve an infrastructure problem I hadn’t even defined. This experience was the catalyst for a deeper realization: we are moving away from simple chatbots and entering the era of autonomous agents.

In this post, I want to share my thoughts on this shift. I will start with a quick recap of how these models actually work to explain why I believe certain professions are facing an inevitable transformation. We will discuss why mechanical tasks are becoming obsolete, why deep domain expertise is more critical now than ever, and how the role of the engineer is evolving from writing code to orchestrating complex agentic workflows.

Quick Recap of Large Language Models Fundamentals

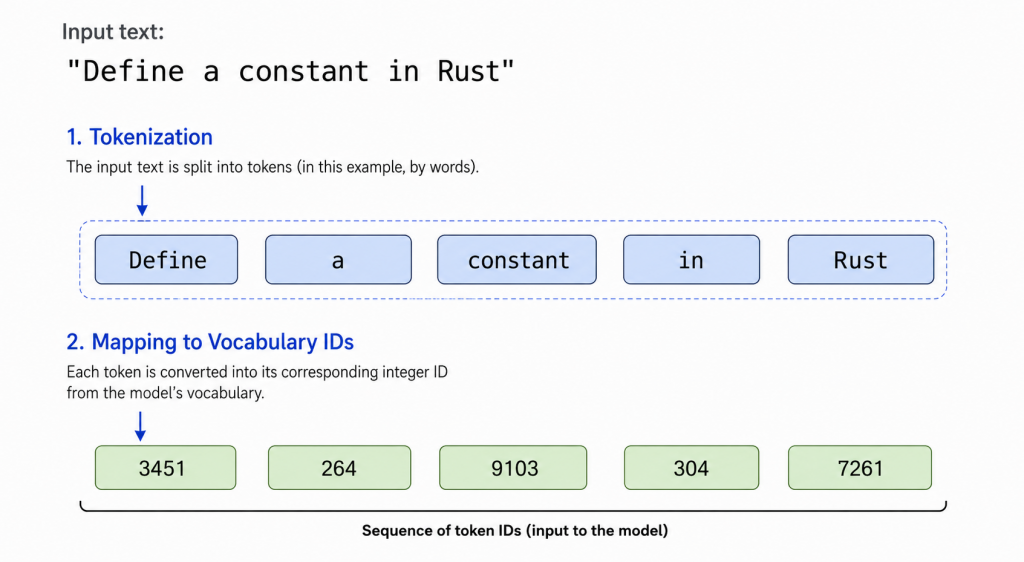

Before diving in, we must define the fundamental unit an AI works with: the Token. In the context of a Large Language Model (LLM), a token is a fragment of text that the model represents as a discrete integer ID. Depending on the tokenizer, a token can be a single character, a subword fragment such as a prefix, or a whole word.

The process of Tokenization transforms human language into a sequence of integers. To see how this works in practice, let’s follow a single request through the gears of the model: “Define a constant in Rust”.
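To make the idea concrete, here is a toy greedy longest-match tokenizer. It is only an illustration: the vocabulary TOY_VOCAB and its IDs are hypothetical, while production tokenizers learn their vocabularies from data (for example with byte-pair encoding) and contain tens of thousands of entries, so the real IDs for this prompt will differ.

```python
# Toy tokenizer: greedy longest-match over a tiny, HYPOTHETICAL vocabulary.
# Real LLM tokenizers learn their vocabulary from data (e.g. BPE).
TOY_VOCAB = {
    "Define": 0, " a": 1, " constant": 2, " const": 3, "ant": 4,
    " in": 5, " Rust": 6, " ": 7,
}

def tokenize(text: str, vocab: dict[str, int]) -> list[int]:
    """Split text into the longest matching vocabulary pieces, left to right."""
    ids = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, shrinking until one matches.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in vocab:
                ids.append(vocab[piece])
                i = end
                break
        else:
            raise ValueError(f"no vocabulary entry covers {text[i]!r}")
    return ids

print(tokenize("Define a constant in Rust", TOY_VOCAB))  # -> [0, 1, 2, 5, 6]
```

Note that " constant" wins over the shorter " const" because the sketch always prefers the longest match; real tokenizers apply their own learned merge rules instead.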

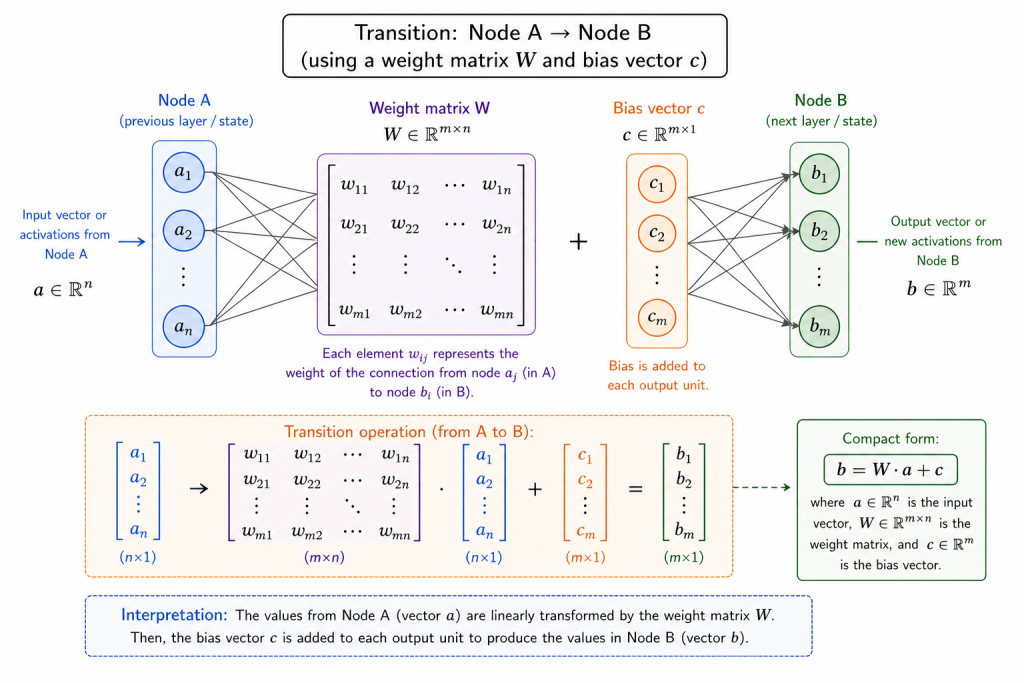

Once the prompt has been tokenized, each token ID is mapped to a vector of numbers (an embedding), and those vectors are passed through the model’s internal architecture. This is where the LLM acts as a high-dimensional mathematical function. The core of this process is the Weight Matrix \(\mathbf{W}\) and the Bias Vector \(\mathbf{c}\).

Think of the Weight Matrix as a massive grid of “learned votes” acquired during training (a full model holds billions of them across all its layers), while the Bias Vector acts as a baseline adjustment that shifts the result regardless of the input. Every time the signal moves from one layer to the next, the model performs a fundamental operation:

$$\mathbf{b} = \mathbf{W} \cdot \mathbf{a} + \mathbf{c}$$

- \(\mathbf{a}\) (Input Vector): The numerical representation of our current context (the prompt).

- \(\mathbf{W}\) (Weight Matrix): The parameters that determine how much influence each part of the prompt has over the final answer.

- \(\mathbf{c}\) (Bias Vector): An additional parameter that shifts the activation to better fit complex patterns.

- \(\mathbf{b}\) (Output Vector): The resulting signal that will lead us to the next token.
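A worked instance of \(\mathbf{b} = \mathbf{W} \cdot \mathbf{a} + \mathbf{c}\) with tiny, made-up dimensions (a 2×3 matrix instead of thousands of rows and columns) may make the operation less abstract. All values are hypothetical, and the helper name affine is introduced only for this sketch.

```python
# One layer's affine step, b = W·a + c, written with plain Python lists.
# The numbers are HYPOTHETICAL; real layers use learned values and far
# larger dimensions.

def affine(W: list[list[float]], a: list[float], c: list[float]) -> list[float]:
    """Return W·a + c for a matrix W (rows x cols), vector a (cols), bias c (rows)."""
    if any(len(row) != len(a) for row in W) or len(W) != len(c):
        raise ValueError("shape mismatch between W, a and c")
    # Each output entry is a dot product of one row of W with a, plus its bias.
    return [sum(w * x for w, x in zip(row, a)) + bias for row, bias in zip(W, c)]

a = [1.0, 0.5, -1.0]          # input vector (3 features)
W = [[0.2, 0.8, 0.0],         # 2 x 3 weight matrix
     [-0.5, 0.1, 0.3]]
c = [0.1, -0.2]               # bias vector (2 outputs)

b = affine(W, a, c)
print(b)
```

Here \(\mathbf{b} \approx (0.7, -0.95)\). In a real network this affine step is followed by a nonlinear activation function; without it, any stack of layers would collapse into a single affine map.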

In our example, the parameters in \(\mathbf{W}\) that are associated with “Rust” and “constant” will resonate more strongly. They act like a filter that guides the signal towards a specific outcome. The model isn’t “thinking” about the question; it’s performing billions of multiplications to see which direction the signal should take.

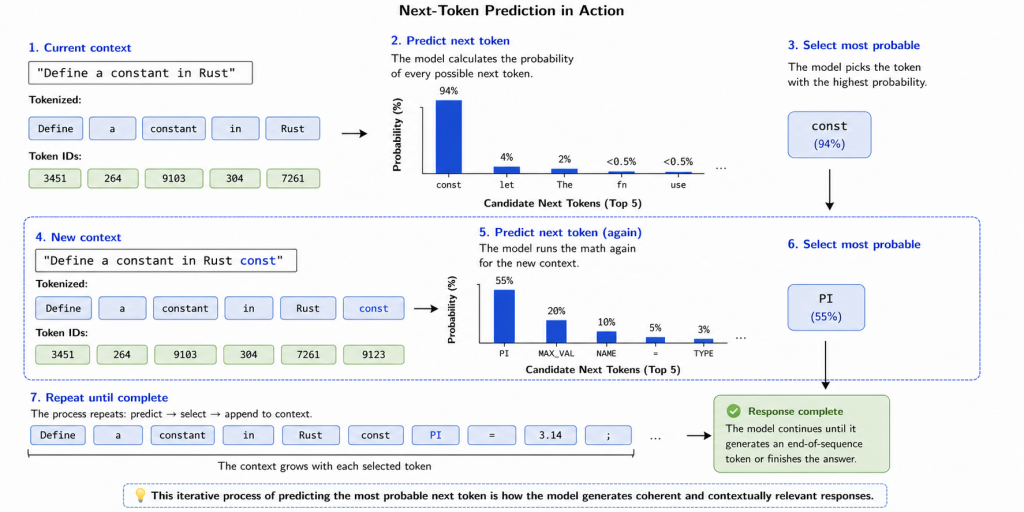

The final step is turning that mathematical result back into language through next-token prediction. Given the context “Define a constant in Rust”, the model calculates a probability distribution for the next possible token.

As shown in the image, the model determines that the most statistically likely way to start the answer is with the keyword const.

The model chooses const, and then repeats the entire process. Now, the new context is: “Define a constant in Rust. const”. It runs the math again to predict the next token (likely PI or MAX_VAL), and then the next, until the response is complete.
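The prediction loop described above can be sketched in a few lines. This is a toy, not a real model: the hypothetical FAKE_LOGITS table stands in for the network and only looks at the last token, whereas a real LLM scores candidates from the entire context. Softmax turns raw scores into a probability distribution like the one in the image, and the loop uses greedy decoding (always take the most probable token), which is only one of several decoding strategies.

```python
import math

def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# HYPOTHETICAL stand-in for the model: maps the last token of the context
# to scores for candidate next tokens.
FAKE_LOGITS = {
    "Rust": {"const": 4.0, "let": 2.0, "fn": 1.0},
    "const": {"PI": 3.0, "MAX_VAL": 2.5, "x": 0.5},
    "PI": {":": 5.0, "=": 1.0},
    ":": {"f64": 4.0, "u32": 1.5},
    "f64": {"<end>": 6.0},
}

def generate(context: list[str], max_new_tokens: int = 10) -> list[str]:
    """Greedy autoregressive decoding: pick the top token, append it, repeat."""
    context = list(context)  # copy so the caller's list is untouched
    for _ in range(max_new_tokens):
        probs = softmax(FAKE_LOGITS[context[-1]])
        next_token = max(probs, key=probs.get)
        if next_token == "<end>":
            break
        context.append(next_token)  # the new token becomes part of the input
    return context

prompt = ["Define", "a", "constant", "in", "Rust"]
print(generate(prompt))
# -> ['Define', 'a', 'constant', 'in', 'Rust', 'const', 'PI', ':', 'f64']
```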

Bringing Concepts to Reality: From Math to Agency

Now that we’ve seen how the engine works, we can address the big question: if an AI is just a stateless mathematical function, how does it feel so “human” and capable? From our recap, we know the model is a black box that takes an input and produces an output. It has no long-term memory. The “magic” of a conversation in Gemini or ChatGPT is actually a clever illusion built on the Context Window, the limited span of tokens the model can read at once. Every time you send a new prompt, the entire history of the chat is bundled together and re-sent to the model. It isn’t “remembering” your previous message; it is recalculating the most probable next token by re-reading the entire conversation every single time.
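A minimal sketch of that illusion, assuming a hypothetical fake_model function in place of a real LLM call: the model keeps nothing between calls, and the client (ChatSession, also invented for this example) owns the history and re-sends all of it on every turn. The role/content message shape mirrors a common chat-API convention but is used here purely for illustration.

```python
# HYPOTHETICAL stand-in for a stateless LLM call: its output depends only on
# the messages passed in, and it stores nothing between calls.
def fake_model(messages: list[dict[str, str]]) -> str:
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s); the first was {user_turns[0]!r}."

class ChatSession:
    """The client, not the model, owns the conversation history."""

    def __init__(self) -> None:
        self.history: list[dict[str, str]] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        # The ENTIRE history is re-sent on every turn.
        reply = fake_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply

chat = ChatSession()
print(chat.send("My name is Ada."))
print(chat.send("What is my name?"))
```

On the second call, the model can "see" the name only because the client re-sent the first message. Real context windows are finite, so long conversations eventually have to be truncated or summarized to fit.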

But memory is only half the story. How does a statistical engine know it should act like a senior engineer and not a medieval poet? This behavior is governed by three invisible layers.

First, the System Prompt. This is a hidden set of instructions injected at the very beginning of the context window. It nudges the probabilities toward the behavior the product wants, such as technical, professional responses. When I use Claude Code, the system prompt steers the “statistical path of least resistance” toward clean code and infrastructure logic rather than creative prose.

Second, the model has been aligned through human feedback, for example via Reinforcement Learning from Human Feedback (RLHF). This is essentially a specialized training phase where humans rank the model’s outputs, teaching the math to favor helpfulness and instruction-following. This is why the model actually “listens” to the system prompt instead of drifting off into random internet patterns.
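One common way to turn those human rankings into a training signal (the exact recipe varies between models) is to train a reward model with a pairwise loss: when humans preferred response A over response B, minimize \(-\log \sigma(r_A - r_B)\), where \(r\) is the reward model's score and \(\sigma\) the sigmoid. A minimal sketch of that loss with hypothetical scores; the function name is invented for this example.

```python
import math

def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)): small when the human-preferred
    response already scores higher, large when the ranking is inverted."""
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        # -log(sigmoid(m)) == log(1 + exp(-m))
        return math.log1p(math.exp(-margin))
    # Same quantity rewritten for negative margins so exp() never overflows.
    return -margin + math.log1p(math.exp(margin))

# HYPOTHETICAL reward-model scores for two answers to the same prompt.
print(pairwise_preference_loss(2.0, -1.0))  # agrees with the human ranking: low loss
print(pairwise_preference_loss(-1.0, 2.0))  # contradicts it: high loss
```

Minimizing this loss pushes the reward model to score human-preferred answers higher; that reward then guides further training of the language model itself.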

Finally, there is Tool Use. This is the bridge between a text predictor and an active participant. Modern models are trained to emit specific, “special” tokens that mark a tool call; the application running the model (here, Claude Code) detects them, executes the requested action, and feeds the result back into the context. When Claude Code decided to run docker compose up, it didn’t do it out of a desire to see my blog live. It predicted that, given the failure logs in its context, the most statistically logical next step was to trigger a shell-execution tool.
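The important detail is that the model only writes text; the surrounding program parses that text and runs the tool. A minimal sketch of that loop, assuming a hypothetical JSON tool-call format and a fake_model stand-in. Real products such as Claude Code define their own formats and safeguards, which this sketch does not reproduce, and the run_shell tool here is a stub that executes nothing.

```python
import json

# HYPOTHETICAL tool registry. run_shell is a stub that returns a canned
# string instead of executing anything, so the sketch is safe to run.
def run_shell(command: str) -> str:
    return f"(stub) exit code 0 for: {command}"

TOOLS = {"run_shell": run_shell}

def fake_model(context: list[str]) -> str:
    """HYPOTHETICAL stand-in for the LLM: emits a JSON tool call until a tool
    result appears in the context, then emits a plain-text answer."""
    if not any(entry.startswith("TOOL RESULT:") for entry in context):
        return json.dumps({"tool": "run_shell", "args": {"command": "docker compose up"}})
    return "The containers are up; the mockup is ready."

def agent_loop(task: str, max_steps: int = 5) -> str:
    context = [task]
    for _ in range(max_steps):
        output = fake_model(context)
        try:
            call = json.loads(output)
        except json.JSONDecodeError:
            return output  # plain text: the model considers the task done
        # The harness, not the model, executes the requested tool...
        result = TOOLS[call["tool"]](**call["args"])
        # ...and appends the result so the next prediction can use it.
        context += [output, f"TOOL RESULT: {result}"]
    return "stopped: step limit reached"

print(agent_loop("Serve a mockup of the blog. The previous build failed."))
```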

By understanding that AI is a high-dimensional statistical engine guided by these layers of context, constraints, and tools, it becomes clear why deterministic and mechanical tasks are its first victims. If a solution lies on the most probable path (like fixing a standard Docker mismatch or writing boilerplate code), the model will find it effortlessly. It doesn’t need to think, because the answer is already carved into the math of its weights.

The Automation of Deterministic Implementation Tasks

I see a striking parallel between our current moment and the Industrial Revolution of the 18th century. Just as the steam engine replaced the physical repetition of manual labor, LLMs are beginning to replace the mental repetition of deterministic tasks.

At first glance, this sounds alarmist. The idea that a machine can perform your job faster and more accurately suggests a future where high-skilled workers are rendered obsolete. However, I believe the reality is more nuanced: AI is not necessarily replacing expertise; it is replacing mechanical implementation.

This phenomenon is not limited to software. We are seeing a shift across every sector where tasks rely on statistical certainties:

- Software Engineering: Writing boilerplate code, standard CRUD operations, and basic unit tests.

- Legal & Administration: Drafting standard contracts, summarizing long documents, and cross-referencing regulatory compliance.

- Finance & Accounting: Invoice reconciliation, tax calculations based on fixed formulas, and basic financial reporting.

- Architecture & Design: Generating standard floor plans from constraints or creating basic layouts based on established UI/UX patterns.

These tasks have required human effort until now only because we lacked a “mental loom” to automate them. But because the probabilistic path for these implementations is so deeply carved into collective human knowledge (as captured in the model’s weights), performing them manually is becoming a low-value activity.

Just as 18th-century artisans had to transition from manual weaving to overseeing the machines that did it, we are witnessing the extinction of the “mechanical professional”. If a task is deterministic, it is no longer a human value-add. It is simply a candidate for total automation.

The Requirement of Domain Expertise in AI-Assisted Development

If mechanical tasks are handled by LLMs, where does human value reside? What is our role in this new landscape?

I believe we are shifting from being builders to being Architects. Our value now lies at the edges that AI cannot yet reach: creative strategy, edge-case intuition, and novel, unexpected approaches. In the pre-AI era, a junior developer spent 80% of their time in a “Learning & Applying” loop: studying the problem, trying a fix, failing, and repeating. An expert, however, has already internalized these patterns through years of experience.

In this new paradigm, the expert can short-circuit that loop. Here are three reasons why I think deep domain expertise is now more valuable than it was six months ago:

- The Creative Edge: While an LLM is busy predicting the most probable next token, an expert proposes the improbable but brilliant idea. Creativity often involves breaking the statistical path. While we can nudge AI to be creative via parameters like temperature, human imagination still holds the edge in connecting disparate, non-linear concepts that haven’t been mapped in a training set.

- The Sparring Partner Effect: Competing against an AI on pure knowledge is a losing battle. An LLM has absorbed patterns from codebases such as the Linux kernel or FFmpeg during training and can recall and combine them in seconds, a breadth of knowledge no human could accumulate in seven lifetimes. Instead of fighting this, we must embrace the AI as a high-speed research tool. By using it as a sparring partner, we can validate our theories and reach solutions that neither the human nor the machine could have found alone.

- Validation vs. Hallucination: A novice using AI is dangerous because they cannot distinguish a brilliant solution from a confident hallucination. An expert provides the final layer of truth. Beyond spotting errors, the expert steers the model by identifying the trade-offs of a proposed solution. This feedback loop forces the AI to pivot, discard weak ideas, and focus its immense computational power on a viable approach.
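Temperature, mentioned in the first point, works by dividing the model's raw scores (logits) by a value \(T\) before the softmax: \(T < 1\) sharpens the distribution toward the most probable token, while \(T > 1\) flattens it so less likely tokens are sampled more often. A minimal sketch with hypothetical candidate tokens and logits:

```python
import math
import random

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Scale logits by 1/temperature, then normalize into probabilities."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

tokens = ["const", "let", "static"]   # HYPOTHETICAL candidates
logits = [3.0, 1.5, 0.5]              # HYPOTHETICAL raw scores

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])

# Temperature only matters when sampling instead of always taking the top token.
rng = random.Random(0)
print(rng.choices(tokens, weights=softmax_with_temperature(logits, 2.0), k=5))
```

Even at high temperature, the model is still sampling from its learned distribution, which is why tuning this knob is no substitute for genuinely new ideas.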

Amplified Intelligence: A novice using AI is still a novice with a faster shovel. An expert using AI is a visionary with a power plant.

The time saved by automating mechanical tasks isn’t free time; it is strategic time. In a world where everyone can generate code, the winner is the one who knows exactly what needs to be built and why it will work better than the standard solution.

Putting Theory into Practice: My Personal AI Workflow

I don’t use a single AI for everything; I treat them as specialized members of my team, each serving a distinct purpose in my engineering process.

Gemini

Since my core expertise is engineering, I use Gemini to polish my raw drafts. It ensures my technical ideas aren’t lost in poor delivery, helping me maintain a professional tone without losing the technical depth.

Claude Code

I use Claude in two ways:

- The Mentor (The OS Case): I am currently developing my own Operating System in Rust. While I have technical intuition from C and Assembly, kernel architecture is new to me. I don’t ask Claude to build a kernel. I design the logic, code the modules, and then use Claude as a senior validator. I may ask: “I’ve approached this interrupt handler this way; is this optimal? Is there a better pattern in modern kernel design? Why do you think this approach is good or bad? How does the Linux kernel handle this, and why?” It bridges the gap between my intuition and the “best practices” it has learned from millions of lines of code.

- The High-Speed Worker (The Dotfiles Case): Recently, I turned my dotfiles into an autonomous, cross-platform installer. Could I have done it myself? Yes. But it would have involved weeks of tedious manual scripting. By giving precise instructions on file hierarchy, symlink logic, and workflow constraints, I directed the AI to do the manual labor. What was a massive backlog item was completed in a handful of prompts.
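For readers wondering what "symlink logic" means in a dotfiles installer, here is a minimal sketch of the idea, not the installer described above: it mirrors every file from a hypothetical repository folder into a target home directory as a symlink, renames any regular file already there to a .bak copy, and leaves correct links untouched. The function name and paths are placeholders chosen for this example.

```python
import tempfile
from pathlib import Path

def link_dotfiles(repo_dir: Path, home_dir: Path) -> list[str]:
    """Symlink each file under repo_dir into home_dir, mirroring the hierarchy.

    Existing regular files are renamed to '<name>.bak' before linking;
    links that already point at the right file are left alone.
    """
    actions = []
    for src in sorted(p for p in repo_dir.rglob("*") if p.is_file()):
        dest = home_dir / src.relative_to(repo_dir)
        dest.parent.mkdir(parents=True, exist_ok=True)
        if dest.is_symlink():
            if dest.resolve() == src.resolve():
                actions.append(f"ok      {dest}")
                continue
            dest.unlink()  # stale link pointing somewhere else
        elif dest.exists():
            dest.rename(dest.with_name(dest.name + ".bak"))
            actions.append(f"backup  {dest}")
        dest.symlink_to(src.resolve())
        actions.append(f"link    {dest}")
    return actions

# Demo in a throwaway directory so nothing in the real home is touched.
with tempfile.TemporaryDirectory() as tmp:
    repo, home = Path(tmp, "dotfiles"), Path(tmp, "home")
    (repo / ".config" / "nvim").mkdir(parents=True)
    (repo / ".config" / "nvim" / "init.lua").write_text("-- editor config\n")
    (repo / ".zshrc").write_text("# shell config\n")
    home.mkdir()
    (home / ".zshrc").write_text("# old config\n")
    for line in link_dotfiles(repo, home):
        print(line)
```

Running it a second time reports every link as "ok", which is the idempotence an installer needs. On Windows, creating symlinks may require Developer Mode or administrator rights, one of the details a cross-platform version has to handle.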

ChatGPT

For isolated, context-free questions (like deciphering the bits in the CR4 register), ChatGPT is my go-to. It excels at research campaigns: collecting and summarizing academic papers and technical sources, allowing me to filter out the noise and focus on the relevant literature.

The takeaway

The value didn’t come from the AI; it came from the direction I gave. I provided the Why and the How, and the AI provided the Doing. It didn’t replace me; it gave me back months of my life.

Emerging Paradigms: Agentic Workflows and System Orchestration

While the experience with Claude Code blew my mind, I recently encountered a second one that fundamentally changed how I build: the transition from single-prompt interactions to custom Agentic Workflows.

I’ll be honest: I started a new project with low expectations. Based on past experiences where models would ignore instructions or confidently invent solutions, I was “praying for it not to be a disaster”. I expected the typical Question-Response fatigue. What I found instead was a system so stable and efficient that it felt like I had hired a specialized team.

The breakthrough wasn’t just using AI; it was moving away from the “one-model-does-all” approach. Instead of asking for a solution in one go, I designed a structured flow tailored to the project’s needs. Once I narrowed the scope of each step, the hallucinations virtually disappeared.

- The Global Context: I maintain a central project file that defines the high-level goals and architecture. This ensures the “Main Prompt” always knows the Why and the What.

- Specialized Sub-Agents: I broke the project into sub-tasks (Design, Implementation, Validation) and assigned each to a dedicated agent.

- Sequential Handoffs: I removed the uncertainty of dynamic planning. Instead, I defined a clear chain: when Agent A finishes successfully, it triggers Agent B.
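The three ingredients above (a shared global context, specialized agents, and a fixed chain of handoffs) can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the actual setup behind this project: the agents are hypothetical placeholder functions rather than real model calls, the global context is a plain string instead of a project file, and the names Pipeline and StepResult are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class StepResult:
    ok: bool
    output: str

@dataclass
class Pipeline:
    """Fixed chain of agents: each runs only if the previous one succeeded."""
    global_context: str
    steps: list[tuple[str, Callable[[str, str], StepResult]]] = field(default_factory=list)

    def run(self, task: str) -> list[str]:
        log = []
        handoff = task
        for name, agent in self.steps:
            # Every agent sees the shared global context plus the previous output.
            result = agent(self.global_context, handoff)
            log.append(f"{name}: {'ok' if result.ok else 'FAILED'}")
            if not result.ok:
                break  # stop the chain; the human orchestrator steps in
            handoff = result.output  # Agent A's output becomes Agent B's input
        return log

# HYPOTHETICAL agents. In practice each would wrap a model call with its own
# narrow instructions and tools; here they are plain functions.
def design(ctx: str, task: str) -> StepResult:
    return StepResult(True, f"design for {task!r} under: {ctx}")

def implement(ctx: str, design_doc: str) -> StepResult:
    return StepResult(True, f"code implementing [{design_doc}]")

def validate(ctx: str, code: str) -> StepResult:
    return StepResult("implementing" in code, "validation report")

pipeline = Pipeline(
    global_context="Static blog, tags and categories, no comments",
    steps=[("Design", design), ("Implementation", implement), ("Validation", validate)],
)
print(pipeline.run("tag pages"))  # -> ['Design: ok', 'Implementation: ok', 'Validation: ok']
```

The deliberate design choice is the absence of dynamic planning: the order of agents is fixed in code, so the only decision left to each step is whether it succeeded.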

By acting as the Orchestrator, I provided the roadmap and the specialized toolbox, and the agents provided the supervised labor. The result? A project that would have taken weeks of back-and-forth chatting was completed with surgical precision. It turns out that when you give an AI a narrow enough scope and a clear place in a sequence, it becomes incredibly reliable.

Adapting to the Strange New Reality

We are living in strange times. There is a palpable feeling of unease as we witness AI performing tasks that, until recently, defined our professional identities. The fear that AI might take our jobs is a common sentiment, but the most important thing we can do right now is observe the reality of this shift and take action.

We must accept that mechanical and deterministic tasks will be handled by AI, whether we like it or not. AI is already faster, and in the near future, it will be undeniably better and more cost-effective for these specific types of work. Fighting against this efficiency is a losing battle. Instead, the path forward is adaptation.

Value in the AI era is shifting away from the doing and toward the knowing. This is why becoming an expert in your field is more critical than ever. By cultivating deep domain knowledge, you provide the creative edge, the critical validation, and the strategic Why that a statistical engine cannot generate alone.

As we look toward the horizon, the Agentic Workflow stands as the current state of the art. It represents our new professional frontier: a world where experts design the flows, set the goals, and orchestrate the agents that build and validate our systems. The AI didn’t replace the engineer; it evolved the role from a manual weaver to a designer of the loom.

The question is no longer if the world is changing, but how fast you are willing to adapt to it.